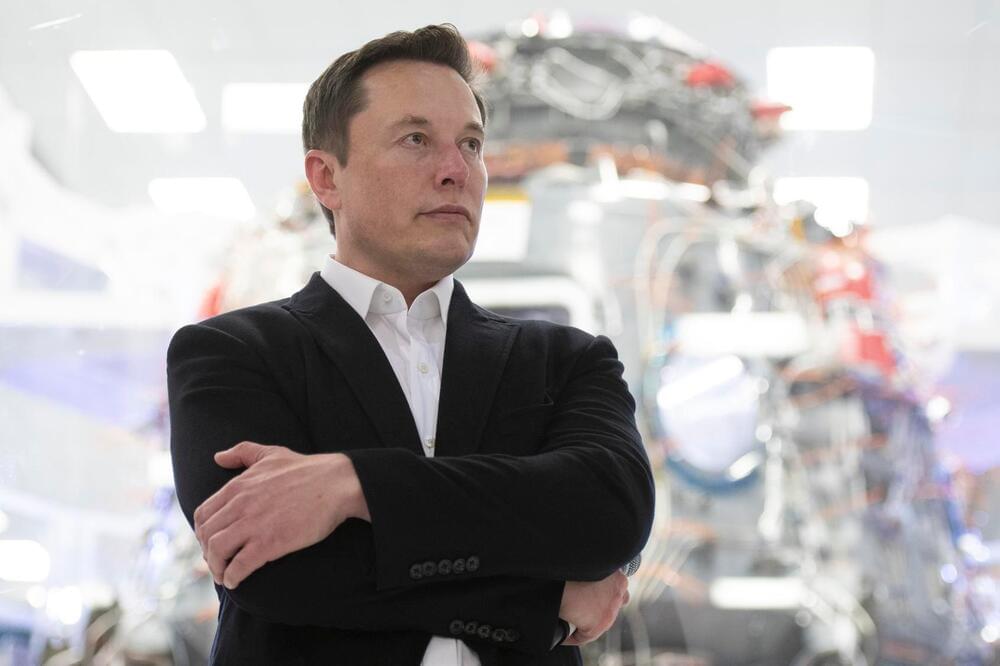

Elon Musk tweeted that Tesla may get into the lithium mining and refining business directly and at scale because the cost of the metal, a key component in manufacturing batteries, has risen so high.

“Price of lithium has gone to insane levels,” Musk tweeted. “There is no shortage of the element itself, as lithium is almost everywhere on Earth, but pace of extraction/refinement is slow.”

The Tesla and SpaceX CEO was responding to a tweet charting the average price of lithium per tonne over the last two decades, which showed a massive increase since 2021. According to Benchmark Mineral Intelligence, the cost of the metal has gone up more than 480% in the last year.