Archive for the ‘existential risks’ category: Page 8

Jul 23, 2024

Can We Burn Uranus? | Dead Planets Society Podcast

Posted by Dan Breeden in categories: asteroid/comet impacts, existential risks, physics

What would it take to set Uranus ablaze? Is it even possible to burn it in the typical sense? If anyone can figure it out, it’s the Dead Planets Society.

Join Dead Planeteers Leah and Chelsea as they invite planetary scientist Paul Byrne back to the podcast for more of their chaotic antics.

Jul 21, 2024

What is AGI and how will we know when it’s been attained?

Posted by Dan Breeden in categories: existential risks, robotics/AI

Achieving such a concept, commonly referred to as AGI, is the driving mission of ChatGPT-maker OpenAI and a priority for the elite research wings of tech giants Amazon, Google, Meta and Microsoft.

It’s also a cause for concern for world governments. Leading AI scientists published research Thursday in the journal Science warning that unchecked AI agents with “long-term planning” skills could pose an existential risk to humanity.

But what exactly is AGI and how will we know when it’s been attained? Once on the fringe of computer science, it’s now a buzzword that’s being constantly redefined by those trying to make it happen.

Jul 21, 2024

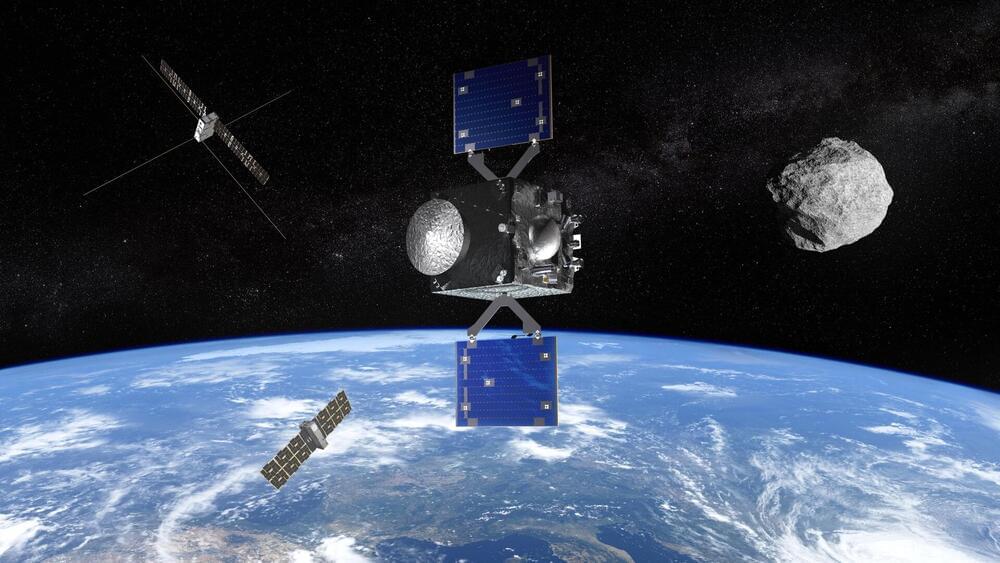

ESA supports work on Apophis mission

Posted by Saúl Morales Rodriguéz in categories: asteroid/comet impacts, existential risks

BUSAN, South Korea — The European Space Agency will allow a proposed mission to the asteroid Apophis to proceed to a next stage of development to keep it on schedule even though it is not yet fully funded.

ESA announced July 16 that its space safety program, which includes planetary defense, has given the Ramses mission permission to begin preparatory work. The mission is designed to visit Apophis before the asteroid makes a very close flyby of Earth in April 2029.

Ramses, or Rapid Apophis Mission for Space Safety, would use the same spacecraft bus as ESA’s Hera mission, scheduled to launch this October to visit the asteroid Didymos, whose moon Dimorphos was the target of NASA’s DART mission to deflect its orbit. Ramses will carry two cubesats for additional studies of the asteroid.

Jul 18, 2024

The Fermi Paradox: Fine Tuned Universe

Posted by Dan Breeden in categories: existential risks, media & arts

The first 150 people to join Planet Wild by clicking this link, or by adding my code ISAAC7 later, will get their first month for free: https://planetwild.com/r/isaacarthur/.…

If you want to get to know them better first, check out their latest video on raising baby sharks and releasing them back into the wild: https://planetwild.com/r/isaacarthur/m17

Our universe is a strange place, with underlying rules we’re only just beginning to understand, but could the strangest thing of all about our Universe be that we are able to live here to observe it in the first place?

Jul 17, 2024

Introducing Ramses, ESA’s mission to asteroid Apophis

Posted by Saúl Morales Rodriguéz in categories: asteroid/comet impacts, existential risks

30 years ago, on 16 July 1994, astronomers watched in awe as the first of many pieces of the Shoemaker-Levy 9 comet slammed into Jupiter with incredible force. The event sparked intense interest in the field of planetary defence as people asked: “Could we do anything to prevent this happening to Earth?”

Today, ESA’s Space Safety programme takes another step towards answering this question. The programme has received permission to begin preparatory work for its next planetary defence mission – the Rapid Apophis Mission for Space Safety (Ramses).

Ramses will rendezvous with the asteroid 99942 Apophis and accompany it through its safe but exceptionally close flyby of Earth in 2029. Researchers will study the asteroid as Earth’s gravity alters its physical characteristics. Their findings will improve our ability to defend our planet from any similar object found to be on a collision course in the future.

Jul 13, 2024

Hanwha Aerospace Starts Production of Laser Based Anti-Aircraft Weapon Block-I

Posted by Saúl Morales Rodriguéz in categories: existential risks, military, robotics/AI

South Korea is poised to enhance its defense capabilities with the launch of a revolutionary laser-based anti-aircraft weapon. Hanwha Aerospace, a leading South Korean defense firm, has begun production following a contract signed in late June with the Defense Acquisition Program Administration (DAPA). The contract, worth KRW100 billion (USD72.5 million), mandates the delivery of the ‘Laser Based Anti-Aircraft Weapon Block-I’ systems to the Republic of Korea (RoK) Armed Forces starting later in 2024. This advanced weapon system, developed since 2019 with an investment of KRW87.1 billion (approximately USD63 million), is set to bolster South Korea’s defense against emerging threats, particularly from North Korea.

DAPA has described the Block-I system as a new-concept future weapon system that employs a laser generated from an optical fiber to neutralize targets. The weapon is engineered to accurately strike small unmanned aerial vehicles (UAVs) and multicopters at close range. This innovative technology is silent, ammunition-free, and operates solely on electricity, making it a cost-effective solution, with each firing costing about KRW2,000. The laser anti-aircraft weapon (Block-I) represents a significant advancement in our defense capabilities. If the output is improved in the future, it could become a game-changing asset on the battlefield, capable of responding to aircraft and ballistic missiles.

Dubbed the “StarWars Project,” the weapon’s development is a crucial element of South Korea’s strategy to modernize its defense systems amidst North Korea’s increasing weapons advancements. The laser beam emitted by the weapon is invisible to the human eye and produces no sound, adding to its tactical advantages. Upon deployment, South Korea will be the first country to operate this type of advanced laser weapon system, marking a significant milestone in military technology. This strategic development underscores South Korea’s commitment to maintaining a robust and modern defense posture in an increasingly complex security environment.

Jul 10, 2024

The Promise and Peril of AI

Posted by Dan Breeden in categories: biotech/medical, drones, ethics, existential risks, law, military, robotics/AI

In early 2023, following an international conference that included dialogue with China, the United States released a “Political Declaration on Responsible Military Use of Artificial Intelligence and Autonomy,” urging states to adopt sensible policies that include ensuring ultimate human control over nuclear weapons. Yet the notion of “human control” itself is hazier than it might seem. If humans authorized a future AI system to “stop an incoming nuclear attack,” how much discretion should it have over how to do so? The challenge is that an AI general enough to successfully thwart such an attack could also be used for offensive purposes.

We need to recognize the fact that AI technologies are inherently dual-use. This is true even of systems already deployed. For instance, the very same drone that delivers medication to a hospital that is inaccessible by road during a rainy season could later carry an explosive to that same hospital. Keep in mind that military operations have for more than a decade been using drones so precise that they can send a missile through a particular window that is literally on the other side of the earth from its operators.

We also have to think through whether we would really want our side to observe a lethal autonomous weapons (LAW) ban if hostile military forces are not doing so. What if an enemy nation sent an AI-controlled contingent of advanced war machines to threaten your security? Wouldn’t you want your side to have an even more intelligent capability to defeat them and keep you safe? This is the primary reason that the “Campaign to Stop Killer Robots” has failed to gain major traction. As of 2024, all major military powers have declined to endorse the campaign, with the notable exception of China, which did so in 2018 but later clarified that it supported a ban on only use, not development—although even this is likely more for strategic and political reasons than moral ones, as autonomous weapons used by the United States and its allies could disadvantage Beijing militarily.

Jul 9, 2024

Putting Black Holes Inside Stuff | Dead Planets Society Podcast

Posted by Dan Breeden in categories: asteroid/comet impacts, cosmology, existential risks, physics

Primordial black holes are tiny versions of the big beasts you typically think of. They’re so small, they could easily fit inside stuff, like a planet, or a star… or a person. So, needless to say, this has piqued the curiosity of our Dead Planeteers.

Leah and Chelsea want to know: can you put primordial black holes inside things, and what happens if you do?

Jul 7, 2024

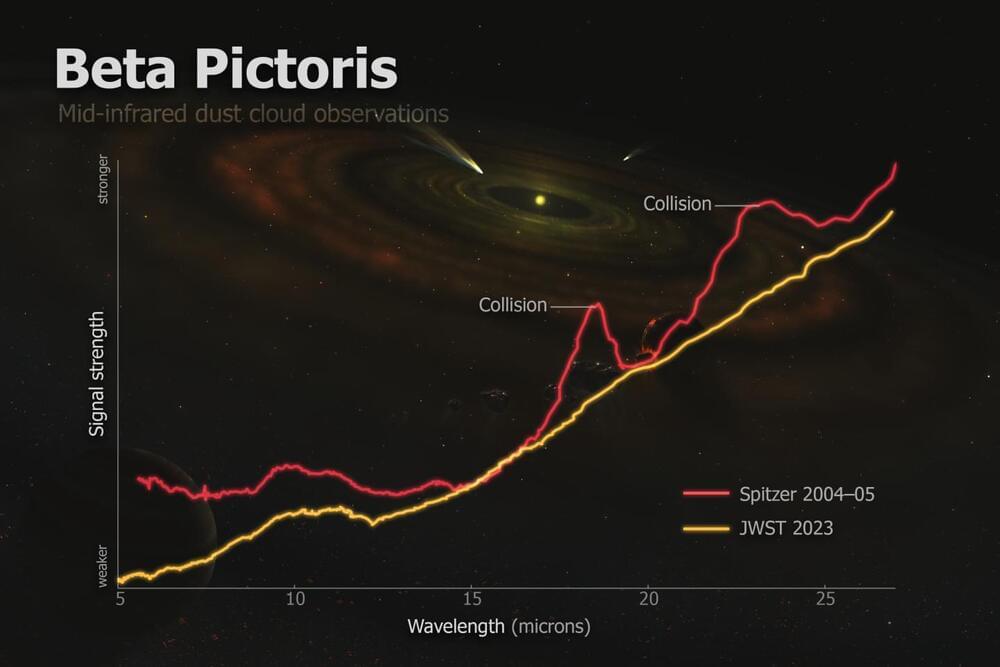

Webb Telescope reveals Asteroid Collision in Neighboring Star System

Posted by Natalie Chan in categories: asteroid/comet impacts, existential risks

Astronomers have captured what appears to be a snapshot of a massive collision of giant asteroids in Beta Pictoris, a neighboring star system known for its early age and tumultuous planet-forming activity.

The observations spotlight the volatile processes that shape star systems like our own, offering a unique glimpse into the primordial stages of planetary formation.

“Beta Pictoris is at an age when planet formation in the terrestrial planet zone is still ongoing through giant asteroid collisions, so what we could be seeing here is basically how rocky planets and other bodies are forming in real time,” said Christine Chen, a Johns Hopkins University astronomer who led the research.