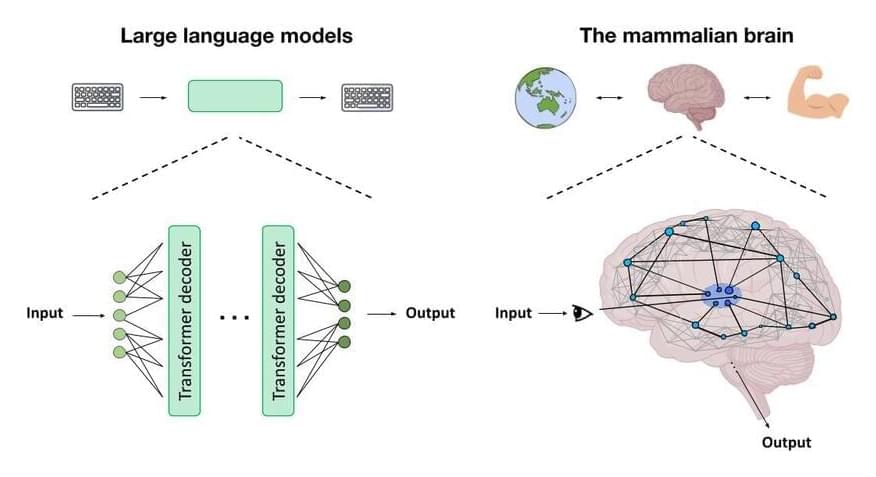

An experimental computing system physically modeled after the biological brain has “learned” to identify handwritten numbers with an overall accuracy of 93.4%. The key innovation in the experiment was a new training algorithm that gave the system continuous information about its success at the task in real time while it learned. The study was published in Nature Communications.

The algorithm outperformed a conventional machine-learning approach, in which training was performed only after a batch of data had been processed and which reached 91.4% accuracy. The researchers also showed that memory of past inputs stored in the system itself enhanced learning. In contrast, other computing approaches store memory within software or hardware separate from a device’s processor.
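To make the distinction between real-time and batch training concrete, here is a minimal software sketch. It is not the researchers' algorithm and does not model the nanowire hardware; it is a toy linear model, and every name in it (`make_data`, `train_online`, `train_batch`, `mse`), along with the learning rate and the synthetic data, is an assumption chosen for illustration. The online learner gets error feedback after every sample, while the batch learner adjusts only once, after the whole batch has been processed.

```python
import random


def make_data(n, seed=0):
    """Synthetic samples from y = 2x + 1 (a made-up target, for illustration only)."""
    rng = random.Random(seed)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return [(x, 2.0 * x + 1.0) for x in xs]


def train_online(data, lr=0.1):
    """Update the weights after every sample, using that sample's error immediately."""
    w, b = 0.0, 0.0
    for x, y in data:
        err = (w * x + b) - y
        w -= lr * err * x
        b -= lr * err
    return w, b


def train_batch(data, lr=0.1):
    """Process the whole batch first, then make a single update from the mean error."""
    w, b = 0.0, 0.0
    n = len(data)
    grad_w = sum(((w * x + b) - y) * x for x, y in data) / n
    grad_b = sum(((w * x + b) - y) for x, y in data) / n
    w -= lr * grad_w
    b -= lr * grad_b
    return w, b


def mse(params, data):
    """Mean squared error of the model (w, b) on the data."""
    w, b = params
    return sum(((w * x + b) - y) ** 2 for x, y in data) / len(data)


if __name__ == "__main__":
    data = make_data(200)
    print("online MSE:", mse(train_online(data), data))
    print("batch MSE: ", mse(train_batch(data), data))
```

After a single pass over the same data, the online learner has made one correction per sample and the batch learner only one in total, so the comparison shows the timing of feedback rather than a fair benchmark of the two methods. The accuracy figures reported in the study come from the physical system and cannot be reproduced with this sketch.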

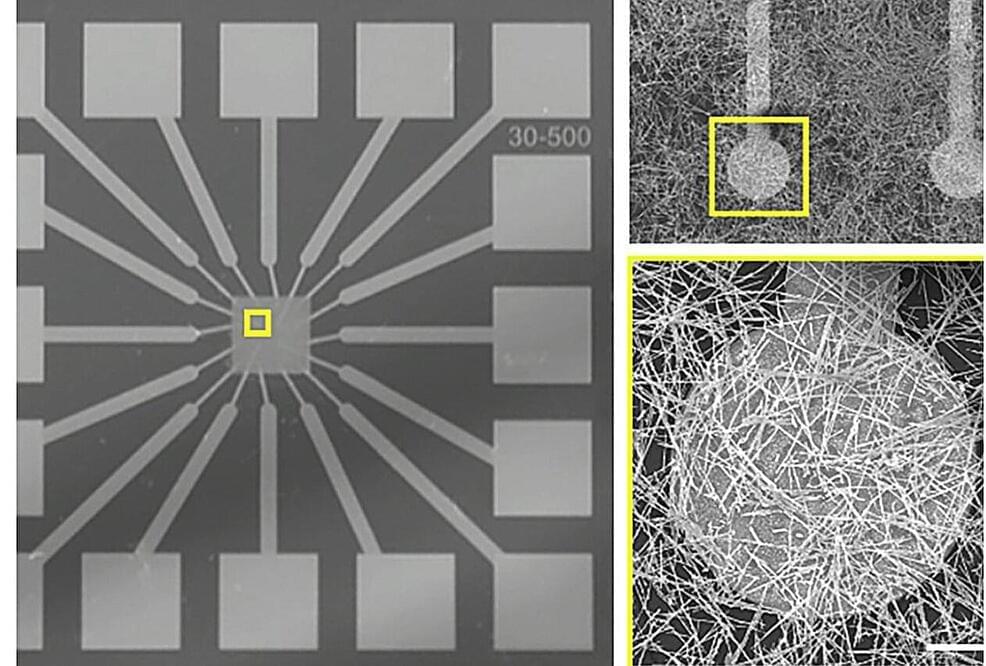

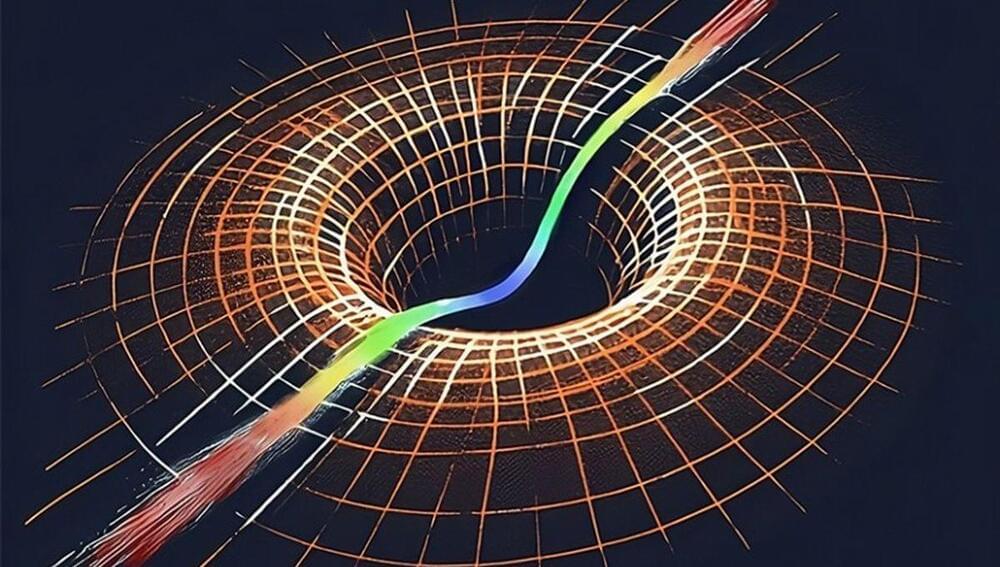

For 15 years, researchers at the California NanoSystems Institute at UCLA, or CNSI, have been developing a new platform technology for computation. The technology is a brain-inspired system composed of a tangled-up network of wires containing silver, laid on a bed of electrodes. The system receives input and produces output via pulses of electricity. The individual wires are so small that their diameter is measured on the nanoscale, in billionths of a meter.