Archive for the ‘supercomputing’ category: Page 2

Dec 27, 2024

Quantum computers in space? Google’s CEO and Elon Musk are planning a revolution

Posted by Genevieve Klien in categories: Elon Musk, quantum physics, space travel, supercomputing

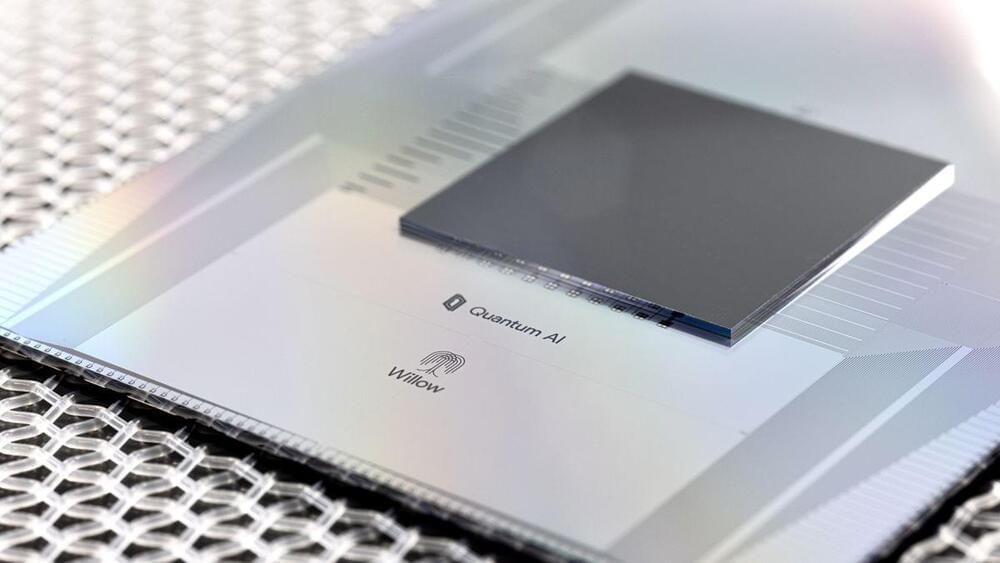

The future of technology often feels like science fiction, and a recent conversation between Sundar Pichai, CEO of Google, and Elon Musk of SpaceX was a case in point. With Google unveiling its quantum chip Willow, a bold idea was floated: launching quantum computers into space. The concept could not only transform quantum computing but also push the boundaries of modern science.

Quantum computing has long promised to solve problems far beyond the reach of traditional computers, and Google’s Willow chip seems to be delivering on that vision. In a recent demonstration, the chip completed a complex calculation in just five minutes—a task that would take classical supercomputers billions of years.

Google’s researchers describe this milestone as exceeding the known scales of physics, potentially unlocking groundbreaking possibilities in scientific research and technological development. But despite its promise, the field of quantum computing faces significant challenges.

Dec 19, 2024

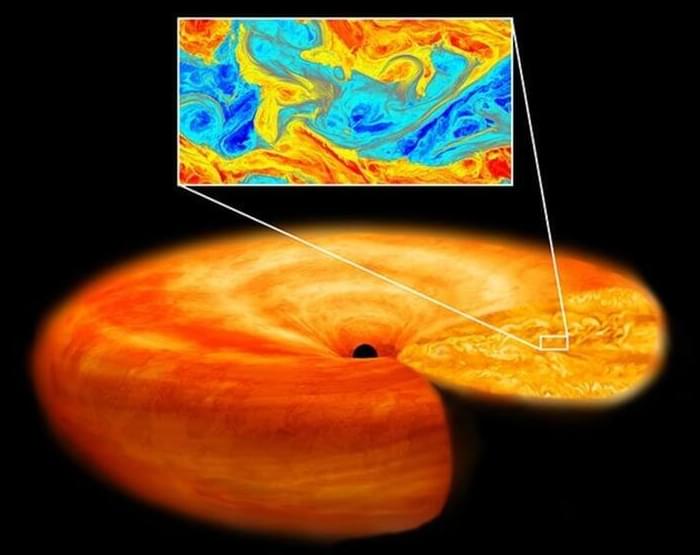

Supercomputers expose hidden inner disk dynamics of a black hole

Posted by Shubham Ghosh Roy in categories: cosmology, supercomputing

For the first time, the “inertial range connecting large and small eddies in accretion disk turbulence” was reproduced.

Black holes cannot be directly detected by ground or space-based telescopes. But the accretion disks of gas, plasma, and dust that orbit them emit detectable electromagnetic radiation, allowing astronomers to infer the presence of black holes.

Dec 17, 2024

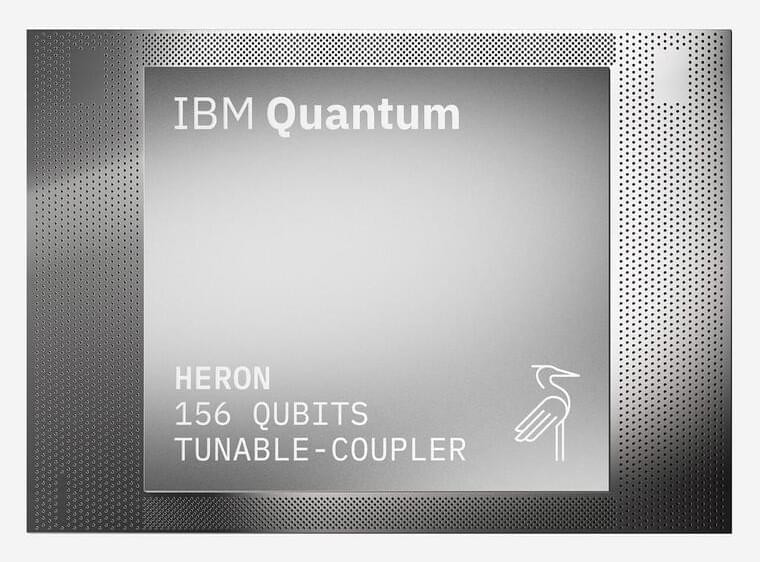

IBM and State of Illinois to Build National Quantum Algorithm Center in Chicago with Universities and Industries

Posted by Genevieve Klien in categories: information science, quantum physics, supercomputing

Anchored by a next-generation IBM Quantum System Two in the Illinois Quantum and Microelectronics Park, the new initiative will advance useful quantum applications as industries move toward quantum-centric supercomputing.

Dec 16, 2024

Google’s Quantum Chip Sparks Debate on Multiverse Theory

Posted by Shubham Ghosh Roy in categories: cosmology, quantum physics, supercomputing

Google’s latest quantum computer chip, which the team dubbed Willow, has ignited a heated debate in the scientific community over the existence of parallel universes.

Following an eye-opening achievement in computational problem-solving, claims have surfaced that the chip’s success aligns with the theory of a multiverse, a concept that suggests our universe is one of many coexisting in parallel dimensions. In this piece, we’ll examine both sides of this argument that seems to have opened up a parallel universe of its own — with one universe of scientists suggesting the Willow experiments offer evidence of a multiverse and the other suggesting it has nothing to do with the theory at all.

According to Google, Willow solved a computational problem in under five minutes — a task that would have taken the world’s fastest supercomputers approximately 10 septillion years. This staggering feat, announced in a blog post and accompanied by a study in the journal Nature, demonstrates the extraordinary potential of quantum computing to tackle problems once thought unsolvable within a human timeframe.
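As a rough sanity check on that figure, the sketch below converts "10 septillion years" into multiples of the age of the universe, which is how another headline on this page expresses the same result. The age of the universe is an assumed value (about 13.8 billion years) that does not appear in the article, and both inputs are order-of-magnitude estimates.

```python
# Rough scale check; both inputs are approximations.
SEPTILLION = 10**24  # short-scale septillion

# "10 septillion years" as quoted in the article.
classical_years = 10 * SEPTILLION

# Assumption (not from the article): the universe is ~13.8 billion years old.
age_of_universe_years = 13.8e9

# How many times the age of the universe the classical runtime would span.
ratio = classical_years / age_of_universe_years
print(f"{ratio:.2e} times the age of the universe")
```

The ratio comes out just under 10^15, which is consistent with the "quadrillion times the age of the universe" phrasing as a rounded order of magnitude.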

Dec 15, 2024

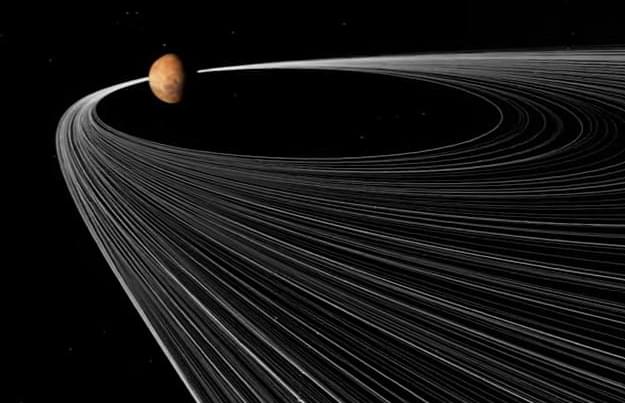

Making Mars’s Moons: Supercomputers offer ‘Disruptive’ New Explanation

Posted by Natalie Chan in categories: space, supercomputing

A NASA study using a series of supercomputer simulations reveals a potential new solution to a longstanding Martian mystery: How did Mars get its moons? The first step, the findings say, may have involved the destruction of an asteroid.

The research team, led by Jacob Kegerreis, a postdoctoral research scientist at NASA’s Ames Research Center in California’s Silicon Valley, found that an asteroid passing near Mars could have been disrupted—a nice way of saying “ripped apart”—by the red planet’s strong gravitational pull.

The paper is published in the journal Icarus.

Dec 14, 2024

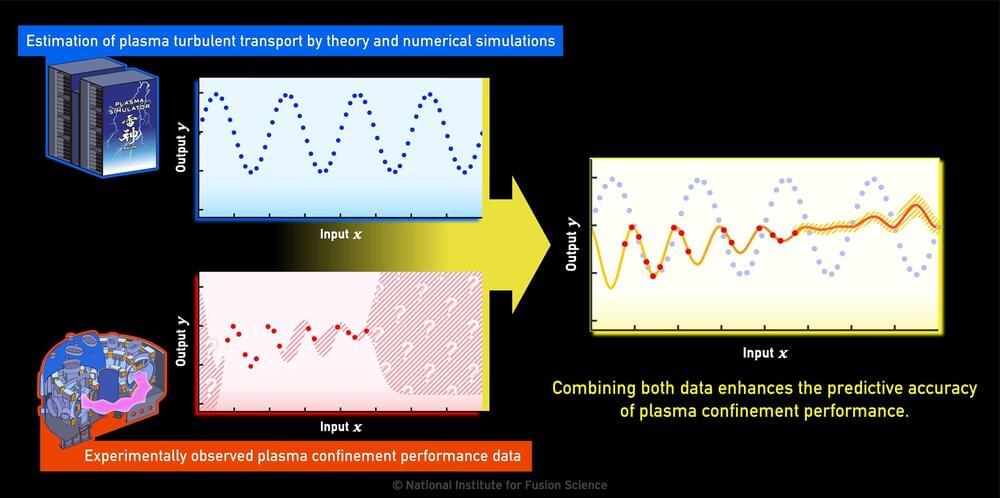

Multi-fidelity modeling boosts predictive accuracy of fusion plasma performance

Posted by Saúl Morales Rodriguéz in categories: engineering, nuclear energy, particle physics, supercomputing

Fusion energy research is being pursued around the world as a means of solving energy problems. Magnetic confinement fusion reactors aim to extract fusion energy by confining extremely hot plasma in strong magnetic fields.

Its development is a comprehensive engineering project involving many advanced technologies, such as superconducting magnets, reduced-activation materials, and beam and wave heating devices. In addition, predicting and controlling the confined plasma, in which numerous charged particles and electromagnetic fields interact in complex ways, is an interesting research subject from a physics perspective.

To understand the transport of energy and particles in confined plasmas, theoretical studies, numerical simulations using supercomputers, and experimental measurements of plasma turbulence are being conducted.

Dec 13, 2024

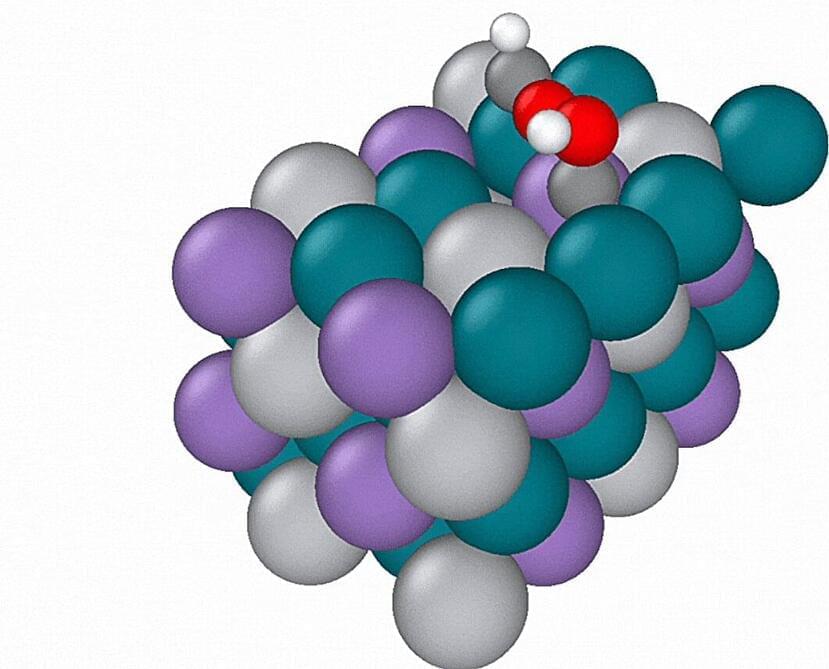

‘AI-at-scale’ method accelerates atomistic simulations for scientists

Posted by Shubham Ghosh Roy in categories: quantum physics, robotics/AI, supercomputing

Quantum calculations of molecular systems often require extraordinary amounts of computing power; these calculations are typically performed on the world’s largest supercomputers to better understand real-world products such as batteries and semiconductors.

Now, UC Berkeley and Lawrence Berkeley National Laboratory (Berkeley Lab) researchers have developed a new machine learning method that significantly speeds up atomistic simulations by improving model scalability. This approach reduces the computing memory required for simulations by more than fivefold compared to existing models and delivers results over ten times faster.

Their research has been accepted at Neural Information Processing Systems (NeurIPS) 2024, a conference and publication venue in artificial intelligence and machine learning. They will present their work at the conference on December 13, and a version of their paper is available on the arXiv preprint server.

Dec 13, 2024

Google ‘Willow’ quantum chip has solved a problem the best supercomputer would have taken a quadrillion times the age of the universe to crack

Posted by Shailesh Prasad in categories: quantum physics, supercomputing

Google’s new 105-qubit quantum processor has surpassed a key milestone first proposed in 1995.

Dec 12, 2024

Pete Shadbolt at MIT EmTech: Building the World’s First Useful Quantum Computer

Posted by Dan Breeden in categories: quantum physics, supercomputing

Quantum computers promise to excel at solving problems that are too large, complex, or cumbersome for even the most powerful supercomputers, but many hurdles remain before they can be reliably put to commercial use. Here, we share an update on PsiQuantum’s approach and its recent progress towards useful, large-scale machines.

PsiQuantum co-founder & Chief Scientific Officer Pete Shadbolt presents at the 2024 MIT EmTech conference in Cambridge, MA.